Constellation1 Broker Hub

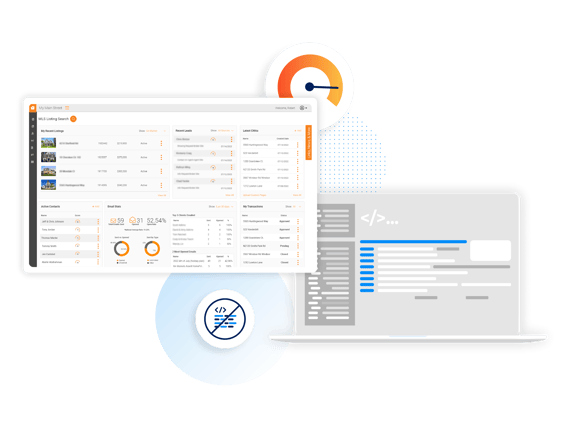

Our robust Broker Hub platform delivers a best-in-class real estate brokerage solution to manage every component of the deal, from website lead generation and relationship management to accounting and transaction management.

CONSTELLATION1 WEBSITES

Shine online

If You Can Dream It, We Can Do It

Constellation1 Broker Hub is a fully customizable, white-label real estate broker platform made up of three key components: Public Apps, Public Websites, and Broker/Agent Enterprise Tools.

Public Apps

We offer industry-leading white-label native apps for iOS and Android, delivered through the Apple App Store and Google Play.

Public Websites

Our lead management platform integrates best-in-class lead generation tools into hosted websites. Our sites offer full MLS integration along with favorites, saved searches, blogs, and community information.

Broker/Agent Enterprise Tools

Our SaaS platform provides documents, CRM, CMA, listings, transactions, and more, making it one of the most comprehensive integrated toolkits available.